I have highlighted the Electronic Frontier Foundation (EFF) and its great work on this website on many occasions. The organization has been at the forefront of many privacy and civil liberties issues, including the increasing domestic use of drones by the U.S. government and unconstitutional NSA spying, as well as a host of other issues.

The latest article from them that caught my attention was published a couple of days ago, and shines a light on the disturbing push by the FBI to create an extensive facial recognition database, which will include criminal and non-criminal photos alike. The information the EFF received via a Freedom of Information Act (FOIA) request shows that the feds may have a mugshot database with up to 52 million photos by 2015.

The program is called Next Generation Identification (NGI), and the aspect of it that bothers the EFF most is the fact that non-criminal and criminal photos will be combined in the same database. So someone who has no criminal record can suddenly be flagged as a suspect just because an algorithm says so. What’s worse, research shows that the potential for false positive identification increases as the dataset grows.

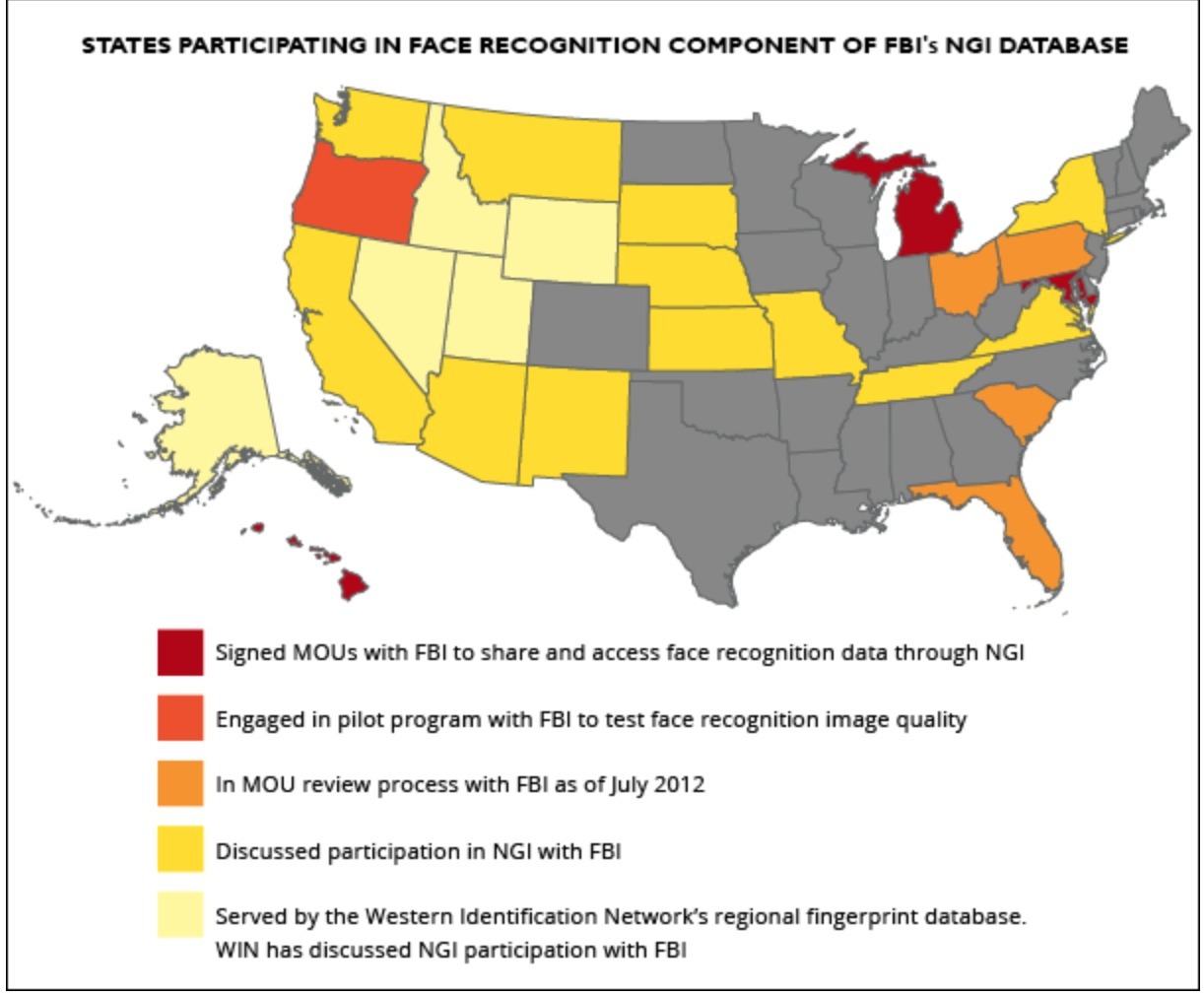

To see if your state is participating, take a look at this map courtesy of the EFF.

More from the EFF:

New documents released by the FBI show that the Bureau is well on its way toward its goal of a fully operational face recognition database by this summer.

EFF received these records in response to our Freedom of Information Act lawsuit for information on Next Generation Identification (NGI)—the FBI’s massive biometric database that may hold records on as much as one third of the U.S. population. The facial recognition component of this database poses real threats to privacy for all Americans.

NGI builds on the FBI’s legacy fingerprint database—which already contains well over 100 million individual records—and has been designed to include multiple forms of biometric data, including palm prints and iris scans in addition to fingerprints and face recognition data. NGI combines all these forms of data in each individual’s file, linking them to personal and biographic data like name, home address, ID number, immigration status, age, race, etc. This immense database is shared with other federal agencies and with the approximately 18,000 tribal, state and local law enforcement agencies across the United States.

One of our biggest concerns about NGI has been the fact that it will include non-criminal as well as criminal face images. We now know that FBI projects that by 2015, the database will include 4.3 million images taken for non-criminal purposes.

Currently, if you apply for any type of job that requires fingerprinting or a background check, your prints are sent to and stored by the FBI in its civil print database. However, the FBI has never before collected a photograph along with those prints. This is changing with NGI. Now an employer could require you to provide a “mug shot” photo along with your fingerprints. If that’s the case, then the FBI will store both your face print and your fingerprints along with your biographic data.

In the past, the FBI has never linked the criminal and non-criminal fingerprint databases. This has meant that any search of the criminal print database (such as to identify a suspect or a latent print at a crime scene) would not touch the non-criminal database. This will also change with NGI. Now every record—whether criminal or non—will have a “Universal Control Number” (UCN), and every search will be run against all records in the database. This means that even if you have never been arrested for a crime, if your employer requires you to submit a photo as part of your background check, your face image could be searched—and you could be implicated as a criminal suspect—just by virtue of having that image in the non-criminal file.

It is unclear what happens when the “true candidate” does not exist in the gallery—does NGI still return possible matches? Could those people then be subject to criminal investigation for no other reason than that a computer thought their face was mathematically similar to a suspect’s? This doesn’t seem to matter much to the FBI—the Bureau notes that because “this is an investigative search and caveats will be prevalent on the return detailing that the [non-FBI] agency is responsible for determining the identity of the subject, there should be NO legal issues.”

Finally, even though FBI claims that its ranked candidate list prevents the problem of false positives (someone being falsely identified), this is not the case. A system that only purports to provide the true candidate in the top 50 candidates 85 percent of the time will return a lot of images of the wrong people. We know from researchers that the risk of false positives increases as the size of the dataset increases—and, at 52 million images, the FBI’s face recognition is a very large dataset. This means that many people will be presented as suspects for crimes they didn’t commit. This is not how our system of justice was designed and should not be a system that Americans tacitly consent to move towards.
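To put the ranked-list figures above into numbers, here is a rough back-of-the-envelope sketch. It is my own illustration, not anything from the EFF or the FBI. It assumes every search returns a full list of 50 candidates and that at most one person in the gallery is the true match; the function name `expected_wrong_candidates` is made up for this example.

```python
def expected_wrong_candidates(list_size: int, hit_rate: float) -> float:
    """Expected number of wrong people on one ranked candidate list.

    Assumptions (illustrative only): the list is always full, and the
    gallery holds at most one true match for the searched face.
    """
    if list_size < 1:
        raise ValueError("list_size must be at least 1")
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    # When the true candidate is on the list (probability hit_rate),
    # one slot is correct and the rest are wrong people.
    wrong_if_hit = list_size - 1
    # When the true candidate is missing, every slot is a wrong person.
    wrong_if_miss = list_size
    return hit_rate * wrong_if_hit + (1.0 - hit_rate) * wrong_if_miss


if __name__ == "__main__":
    wrong = expected_wrong_candidates(list_size=50, hit_rate=0.85)
    print(f"Expected wrong faces per search: {wrong:.2f} out of 50")
```

Under those assumptions, roughly 49 of the 50 faces returned by a typical search belong to people who are not the person being sought, which is the point the EFF is making about false positives.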

Full article here.

In Liberty,

Michael Krieger


Criminals – hell the banksters will all be there with their profiles, ties and all but we all know that they will have a special folder for these dudes.

Mike, thanks. This was very informative.